I wrote this script to load all the Knowledge Base articles (Answers) published in Oracle Service Cloud, then parse them and extract URLs for further analysis (for example, links to migrated sites).

Use a recently cloned test site to run this script.

This sample script covers the following scenarios:

- Authentication

- Executing an Oracle Service Cloud report

- Extracting JSON-formatted report output

- Parsing the output

- Loading an HTML document (Answer) from a URL

- Extracting URLs using BeautifulSoup

- Creating a CSV for analysis

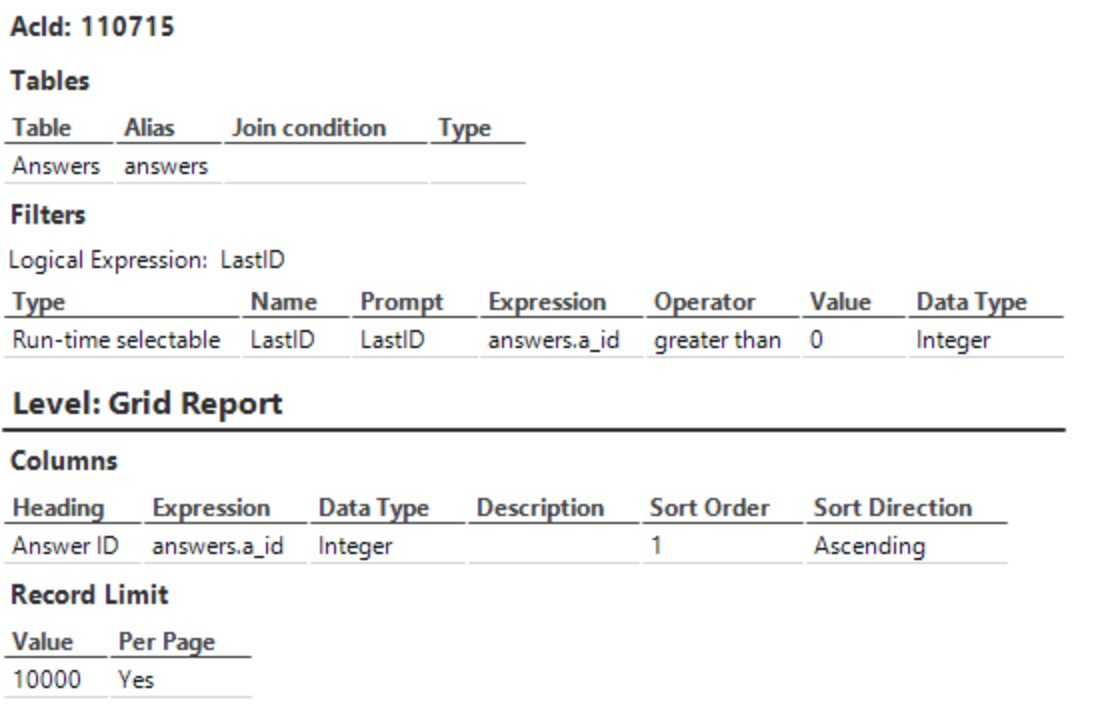

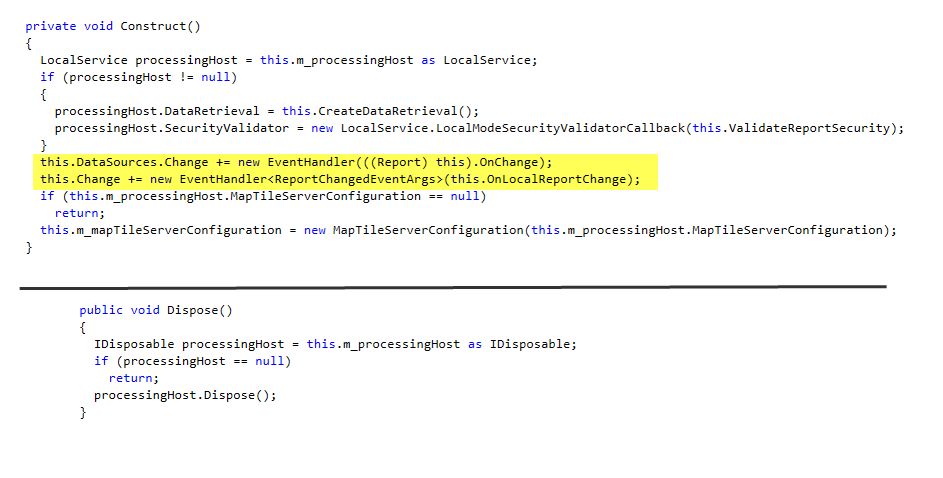

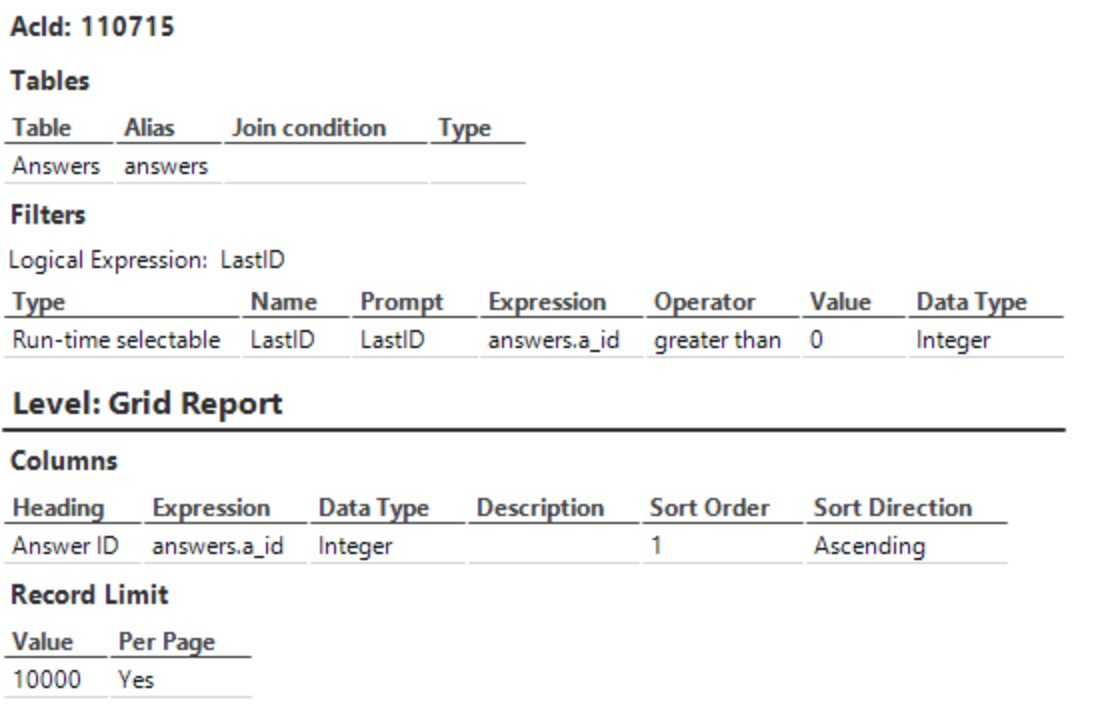

We need a list of Answer IDs to work with. A report was created to expose all the public Answer IDs. LastID is a custom filter on the answers.a_id (AnswerId) column. By default, this report returns all answers with an ID greater than 0. The report is sorted in ascending order by answers.a_id. Save this report and grant execute permission to the profile of the integration account used in the script. Write down the Report ID; we will need it to modify the GetAnswerIDs function.

GetAnswerIDs(LastID) function

The script connects to Oracle Service Cloud and executes the report, which returns JSON data. The first time this function is called, 0 is passed as LastID. The report then returns the first 1000 records (or however many are allowed per call) whose Answer ID is greater than LastID. Subsequent calls pass the last Answer ID seen (see the variable “gLastAnswerId”). This provides a mechanism to call the report repeatedly and load the data incrementally, using the Answer ID as a filter.
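
The paging scheme described here is a form of keyset pagination: each call asks only for IDs greater than the last one seen, and the loop stops when a call comes back empty. Below is a minimal sketch of that loop. The `fetch_page` function is a hypothetical stand-in for the real report call, and the page size of 3 and the sample IDs are made up for illustration.

```python
def fetch_page(last_id, all_ids=(1, 2, 5, 8, 13, 21, 34), page_size=3):
    """Hypothetical stand-in for the report call: returns up to
    page_size IDs greater than last_id, in ascending order."""
    return [i for i in all_ids if i > last_id][:page_size]


def collect_all_ids(fetch=fetch_page):
    """Call fetch repeatedly, passing the highest ID seen so far,
    until a call returns no rows."""
    collected = []
    last_id = 0  # the first call asks for everything greater than 0
    while True:
        page = fetch(last_id)
        if not page:
            break  # an empty page means nothing is left to load
        collected.extend(page)
        last_id = max(page)  # the next call resumes after this ID
    return collected


print(collect_all_ids())
```

Because the report is sorted by Answer ID and filtered on it, no row is returned twice and none is skipped, even though each call is limited in size.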

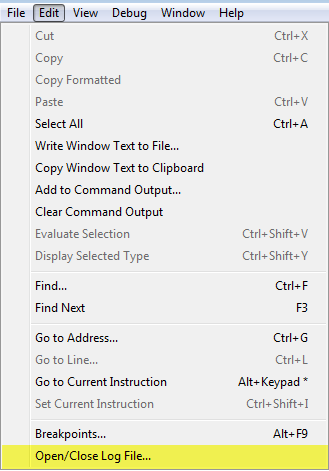

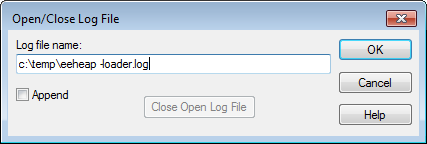

To get this script to work, you have to modify some of the variables.

Modify the “Url” variable and replace “your-domain” with the correct domain.

Update the “data” variable with the correct ID (replace 110715 with the Report ID you wrote down when the report was created earlier).

Provide valid credentials using the OSCUserName and OSCPassword variables. The credentials are case sensitive, and the account should have the necessary permissions to execute reports through the Analytics Report API.
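
To make these three settings easier to see in isolation, here is a small sketch that builds the Basic authorization header and the report request body using only the standard library. The helper names `basic_auth_header` and `report_payload` are my own for illustration and are not part of the script; the credentials and report ID are the same placeholders used above.

```python
import base64


def basic_auth_header(username, password):
    """Build an HTTP Basic Authorization header value."""
    credentials = "{}:{}".format(username, password)
    # Encode to bytes, base64 it, then turn the result back into text
    token = base64.b64encode(credentials.encode("utf-8")).decode("ascii")
    return "Basic {}".format(token)


def report_payload(report_id, last_id):
    """Build the request body: the report ID plus the LastID filter."""
    return {
        "id": report_id,
        "filters": [{"name": "LastID", "values": str(last_id)}],
    }


print(basic_auth_header("USERNAME", "PASSWORD"))
print(report_payload(110715, 0))
```

If you prefer not to build the header by hand, the requests library also accepts an `auth=(username, password)` tuple on `requests.post`, which produces the same Basic header.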

Continuing from while (gContinue):

Let’s continue with the rest of the Script.

Experiment with json.dumps(gData, indent=4) to pretty-print the JSON responses. Some commented-out lines are intentionally left in to help with understanding the script.
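
As a quick illustration of what pretty-printing gives you, the sketch below formats a made-up payload. The script only relies on a "rows" list whose entries carry the Answer ID in the first position; the "columnNames" key and the sample values here are assumptions for the example, not a record of what your site returns.

```python
import json

# Illustrative payload only: "rows" with the Answer ID first is what the
# script reads; the other key and the values are assumed for this example.
sample = {"columnNames": ["Answer ID"], "rows": [["101"], ["102"]]}

# indent=4 spreads the structure over several lines for easier reading
pretty = json.dumps(sample, indent=4)
print(pretty)

# Pull the first column out of every row, as the main loop does
answer_ids = [int(row[0]) for row in sample["rows"]]
print(answer_ids)
```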

Modify the variable “answerUrl” and replace “your-domain” with your site's domain so that the URL points to your knowledge base.

The Answer ID is then used to build a URL, and that URL is used to download the Answer from the public-facing site. We could instead have fetched the actual field that stores the Answer or Summary. The URL-based approach is better if the answer uses Answer Variables (substitution variables) or dynamic content, as in my case, because the downloaded page contains the final rendered HTML. The HTML is then parsed using the BeautifulSoup library.

The KB section was embedded within a particular div tag with a unique class identifier. You may have to change the for loop below to identify HTML tags by id or class, depending on your site’s HTML content.

The for loop below finds these sections:

    for section in soup.find_all('div', {"class":"positioner"}):
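
To see how scoping the search to one container works, here is a small self-contained sketch on a made-up HTML fragment. The markup and URLs are invented; only the `positioner` class name comes from the script.

```python
from bs4 import BeautifulSoup

# Made-up page: one link in the header, one inside the KB content div.
html = """
<div class="header"><a href="https://example.com/home">Home</a></div>
<div class="positioner">
  <p>See <a href="https://example.com/guide">the guide</a>.</p>
</div>
"""

soup = BeautifulSoup(html, "html.parser")

links = []
for section in soup.find_all("div", class_="positioner"):
    # Search inside the matched section, not the whole document,
    # so header and footer links are left out.
    for a in section.find_all("a", href=True):
        links.append(a["href"])

print(links)
```

Note that the inner search is called on `section`. Calling it on `soup` instead would return every link on the page, including the header and footer.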

The line below prevents relative URLs from being picked up:

    if (url.startswith('http')):
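
The filter and the URL breakdown that follows it can be tried out separately with the standard library. This is a sketch only; `describe_link` is a hypothetical helper name and the sample links are invented.

```python
from urllib.parse import unquote, urlparse


def describe_link(href):
    """Return (hostname, scheme, path, query) for an absolute http(s)
    link, or None for relative links and other schemes."""
    url = unquote(href)  # decode %xx escapes, as the script does
    if not url.startswith("http"):
        return None
    parts = urlparse(url)
    return parts.hostname, parts.scheme, parts.path, parts.query


print(describe_link("https://example.com/app/answers/detail/a_id/42?x=1"))
print(describe_link("/app/home"))
print(describe_link("mailto:someone@example.com"))
```

The prefix check also drops `mailto:` and similar links, which is usually what you want when the goal is to list the web sites an Answer points to.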

    import base64
    import csv
    import json  # used by the optional pretty-print line below
    from urllib.parse import unquote, urlparse

    import requests
    from bs4 import BeautifulSoup


    def LoadResponse(Url):
        # Download a page (used for the public Answer URL)
        response = requests.get(Url)
        return response


    def GetAnswerIDs(LastID):
        # Run the report and return the Answer IDs greater than LastID
        Url = r"https://your-domain/services/rest/connect/v1.4/analyticsReportResults/"
        data = {"id": 110715, "filters": [{"name": "LastID", "values": "{}".format(LastID)}]}

        OSCUserName = "USERNAME"
        OSCPassword = "PASSWORD"

        # Build the HTTP Basic authorization value: base64("user:password")
        credentials = "{}:{}".format(OSCUserName, OSCPassword)
        base64_bytes = base64.b64encode(credentials.encode("ascii"))
        Authorization = base64_bytes.decode("ascii")

        headers = {"Content-type": "application/json",
                   "Authorization": "Basic {}".format(Authorization),
                   "OSvC-CREST-Application-Context": "App",
                   "OSvC-CREST-Suppress-Rules": "true"}

        response = requests.post(Url, json=data, headers=headers)
        return response


    # declare all functions above this line

    gContinue = True
    gLastAnswerId = 0

    print("Starting...")

    # Open the CSV once, before the loop, so every batch lands in the same
    # file instead of overwriting the previous one.
    with open("urls.csv", "w", newline="", encoding="utf-8") as file:
        # csv.writer quotes values that contain commas (link text often does)
        writer = csv.writer(file, delimiter=',')
        writer.writerow(["Answer ID", "KB Link", "Host Name", "Url",
                         "Display Text", "Scheme", "Path", "Query String"])

        while (gContinue):
            gContinue = False
            print("Loading Answers > {}".format(gLastAnswerId))

            gResponse = GetAnswerIDs(gLastAnswerId)
            gData = gResponse.json()
            #print(json.dumps(gData, indent=4))

            for r in gData["rows"]:
                gContinue = True  # if we find records, we may have to loop once more
                answerID = int(r[0])
                print("Processing Answer Id : {}".format(answerID))

                # Remember the highest ID seen; the next report call starts after it
                if answerID > gLastAnswerId:
                    gLastAnswerId = answerID

                answerUrl = r"https://your-domain.custhelp.com/app/answers/detail/a_id/{}".format(answerID)
                print(answerUrl)

                response = LoadResponse(answerUrl)
                soup = BeautifulSoup(response.text, "html.parser")

                # KB content is within this element + class; helps to avoid the header and footer
                for section in soup.find_all('div', {"class": "positioner"}):
                    # Search within the section, not the whole page
                    for a in section.find_all('a', href=True):
                        url = unquote(a['href'])
                        if (url.startswith('http')):
                            #print(url)
                            o = urlparse(url)
                            writer.writerow([answerID, answerUrl, o.hostname, url,
                                             a.string, o.scheme, o.path, o.query])

    print("Finished.")
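
Once urls.csv has been produced, a first pass at the analysis can be as simple as counting links per host. The sketch below does that on a made-up, in-memory sample with the same column layout. The rows and host names are invented, and in practice you would open urls.csv instead of using `io.StringIO`.

```python
import csv
import io
from collections import Counter

# Made-up sample in the same column layout as urls.csv.
sample_csv = io.StringIO(
    "Answer ID,KB Link,Host Name,Url,Display Text,Scheme,Path,Query String\n"
    "1,https://kb.example/a_id/1,old.example.com,https://old.example.com/a,A,https,/a,\n"
    "2,https://kb.example/a_id/2,old.example.com,https://old.example.com/b,B,https,/b,\n"
    "2,https://kb.example/a_id/2,new.example.com,https://new.example.com/c,C,https,/c,\n"
)

# skipinitialspace tolerates headers written as "Answer ID, KB Link, ..."
reader = csv.DictReader(sample_csv, skipinitialspace=True)
hosts = Counter(row["Host Name"] for row in reader)

# List hosts from most to least linked
for host, count in hosts.most_common():
    print(host, count)
```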

I hope this was helpful. Please leave your comments below.